Hi Daniel,

Yes, it seems like there should be a smarter way. At the very least, Pix4D should make it possible to delete or disable these obviously erroneous ATPs prior to densification, so that little to no cleaning of the point cloud (which is based on the ATPs) is required. This would also improve downstream products that ultimately depend on ATP quality, such as the mesh (which is based on the point cloud) and, I believe, the orthomosaic (which is partially based on the texturing of the mesh).

I firmly believe the root of the issue is the computation of ATPs from cameras that are spatially close together. The vertical estimate of the point in the cameras’ frames is extremely sensitive to small errors in the cameras’ horizontal positioning. A more robust estimate of the 3D location of an ATP can be made from cameras with a greater baseline/separation.

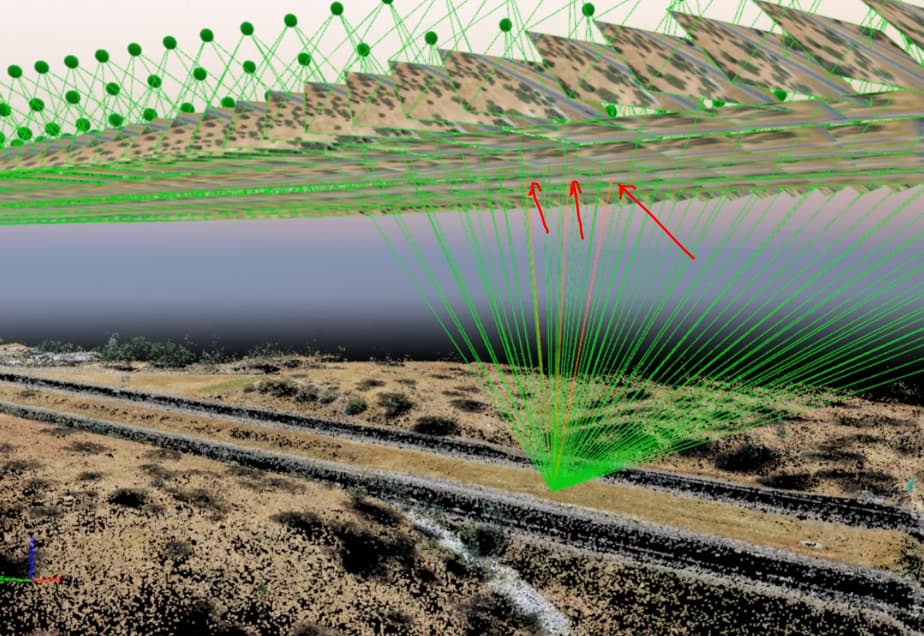
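To make the geometry concrete, here is a minimal 2D sketch of the effect. It is my own toy model, not Pix4D's algorithm: two nadir cameras at the same height look at one ground point, and one camera's horizontal position is wrong by a small amount. The function names and the numbers (100 m height, 5 cm position error) are assumptions chosen only for illustration.

```python
def intersect_rays(c1, d1, c2, d2):
    """Intersect two 2D rays c + t*d, with points given as (x, z)."""
    # Solve c1 + t*d1 = c2 + s*d2 for t with Cramer's rule.
    rx, rz = c2[0] - c1[0], c2[1] - c1[1]
    det = d2[0] * d1[1] - d1[0] * d2[1]
    if det == 0:
        raise ValueError("rays are parallel")
    t = (d2[0] * rz - rx * d2[1]) / det
    return (c1[0] + t * d1[0], c1[1] + t * d1[1])


def vertical_error(height, baseline, position_error):
    """Vertical error of a triangulated point when camera 2 is misplaced.

    Toy setup (assumed): cameras at x = -baseline/2 and x = +baseline/2,
    both at the given height, observing the true point (0, 0).
    """
    true_point = (0.0, 0.0)
    c1 = (-baseline / 2, height)
    c2 = (baseline / 2, height)
    # Viewing directions are correct; only the position of camera 2 is off.
    d1 = (true_point[0] - c1[0], true_point[1] - c1[1])
    d2 = (true_point[0] - c2[0], true_point[1] - c2[1])
    c2_misplaced = (c2[0] + position_error, c2[1])
    _, z = intersect_rays(c1, d1, c2_misplaced, d2)
    return abs(z - true_point[1])


# Same 5 cm horizontal error, three different baselines (metres).
for baseline in (5.0, 20.0, 80.0):
    print(baseline, round(vertical_error(100.0, baseline, 0.05), 3))
```

In this toy model the vertical error works out to height × position error / baseline, so shrinking the baseline by a factor of 10 inflates the vertical error by the same factor.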

Take another example:

The obviously erroneous ATP is indicated with the red arrow. It was found from 3 images that were taken spatially very close to one another. From more distant cameras, we see that the reprojection error is massive (black arrows). By all of its own metrics, however, Pix4D thinks it did a great job with the ATP (blue arrows). I believe those error metrics are based only on the 3 marked images and don’t take into account the reprojection error in the images with the black arrows. I suppose the feature of interest was not automatically identified in those photos, so Pix4D couldn’t calculate the reprojection error there anyway and has no idea how far off the ATP truly is.
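A rough sketch of why the reported error can look excellent while the point is badly wrong: in a simplified 1D pinhole model (an assumption of mine, not Pix4D's actual metric), a point that is 5 m too high reprojects almost correctly into cameras nearly overhead, but far off in a camera that is well to the side. The height, focal length, and camera offsets below are made-up illustrative values.

```python
def project(point, camera_x, camera_height, focal_px):
    """Project a 2D point (x, z) into a nadir-looking 1D pinhole camera."""
    x, z = point
    return focal_px * (x - camera_x) / (camera_height - z)


def reprojection_error(estimated_point, true_point, camera_x,
                       camera_height=100.0, focal_px=3000.0):
    """Pixel distance between where the feature is observed (true point)
    and where the estimated point reprojects in the same camera."""
    observed = project(true_point, camera_x, camera_height, focal_px)
    predicted = project(estimated_point, camera_x, camera_height, focal_px)
    return abs(predicted - observed)


true_point = (0.0, 0.0)
bad_atp = (0.0, 5.0)  # hypothetical ATP triangulated 5 m too high

near = reprojection_error(bad_atp, true_point, camera_x=1.0)   # camera almost overhead
far = reprojection_error(bad_atp, true_point, camera_x=60.0)   # camera 60 m to the side
print(f"near camera: {near:.1f} px, distant camera: {far:.1f} px")
```

If only the near cameras contribute to the metric, the ATP looks fine; the distant camera is the one that would expose the error.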

So Pix4D can apparently only recognize the feature in the 3 neighboring photos. If that is the case, then I need a setting that forces Pix4D either to use more than 3 photos for ATP computation, or to calculate ATPs only from cameras that are spatially separated by an acceptable amount. The flight was flown in an Aerial Grid track, so the default settings should’ve been acceptable:

I am interested in the “Use Distance” option, which is unchecked for an aerial grid flight (I would like to know the theoretical justification for this). The documentation on this option is vague: https://support.pix4d.com/hc/en-us/articles/205433155-Menu-Process-Processing-Options-1-Initial-Processing-Matching

I quote:

" Use Distance: Only available if the images have geolocation. It is useful for oblique or terrestrial projects. Each image is matched with images within a relative distance.

Relative Distance Between Consecutive Images: It allows the user to set the relative distance. "

My images have geolocation. I don’t care to be told when it is useful. I want to understand what this option does. “Each image is matched with images within a relative distance” is unclear to me. It almost sounds like this option specifies an upper limit on the distance between matched images, whereas I actually want to specify a lower limit.
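To spell out the difference between the two kinds of limit, here is a small sketch of pair selection by camera separation. This is purely hypothetical and is not how Pix4D implements “Use Distance”; the function name `candidate_pairs` and its `min_dist`/`max_dist` parameters are my own invention for illustration.

```python
from itertools import combinations
from math import dist


def candidate_pairs(positions, min_dist=None, max_dist=None):
    """Return image pairs whose camera separation lies within the limits.

    positions: dict mapping image name -> (x, y) camera position in metres.
    min_dist / max_dist: optional lower / upper bound on the separation.
    """
    pairs = []
    for a, b in combinations(sorted(positions), 2):
        separation = dist(positions[a], positions[b])
        if min_dist is not None and separation < min_dist:
            continue  # too close together: weak triangulation geometry
        if max_dist is not None and separation > max_dist:
            continue  # too far apart: what an upper limit would exclude
        pairs.append((a, b))
    return pairs


positions = {"a": (0.0, 0.0), "b": (3.0, 0.0), "c": (50.0, 0.0)}
print(candidate_pairs(positions, max_dist=10.0))  # upper limit only
print(candidate_pairs(positions, min_dist=10.0))  # lower limit only
```

An upper limit keeps only the close pair, which is exactly the geometry I want to avoid; a lower limit keeps only the well-separated pairs.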

And finally, “It allows the user to set the relative distance” as the description of “Relative Distance Between Consecutive Images” basically just restates the name of the setting.

Again, the root of the problem is the extremely sensitive triangulation that results from closely spaced cameras. I just need a setting to override that.